The word ‘platform’ is starting to reach the same status as the word ‘internet’ – part of the bedrock, but many have no idea what it really is. In e-health particularly, ‘platform’ is often mixed up with ‘open source’, ‘APIs’ and ‘standards’ in ways that don’t always make sense. Regardless, public policy in the NHS, the US ONC and other countries is being formulated without a clear common understanding of the term. I’m going to try to address some of the ambiguities here.

The key thing to understand about a platform is that it represents progress away from being locked in to a monolith of fixed commitments, toward an open ecosystem. This is true both technologically and economically. Any platform operates in two environments, development and deployment, and these need to be understood separately if the platform concept is to be used properly.

Before going further, I will point readers to Ewan Davis’ blog post on the same topic, in which he articulates some of the same conclusions as I do here, but via different reasoning – particularly about the difference between open source and open standards. Well worth a read.

For some more general thoughts on what a platform is, see this 2007 post by Marc Andreessen (the original Mosaic/Netscape developer). His point of view is more at a developer’s level, and essentially says: if it doesn’t have open interfaces, it’s not a platform.

Automotive Platforms

Building things can be done in two paradigmatic ways: with re-use and without. With no re-use, every time you build a new thing, you have to engineer all of its parts de novo. The result is (hopefully) exactly what you want, but with minimal economy of scale. The earliest cars were all custom-built, with each model being its own thing. If you have ever admired a 1931 Packard ‘gangster’ saloon or an early Duesenberg or Mercedes-Benz, this seems no bad thing, until you realise that as a seller, your prices prevent you from accessing the broad consumer market. Even when Ford introduced the production line, the cars were still largely custom across models – he had just worked out how to mass-produce each model.

1931 Packard 833

In more recent times, auto manufacturers have developed platforms, commonly re-used across companies and continents. The Wikipedia auto platform page gives a good overview. Why did they do this? Because the ability to re-use existing components enables far greater flexibility – a new model is no longer a completely new design – and greatly reduces costs.

What did they develop? A platform in auto manufacturing is a set of standard components that forms a core part of many specific models of a car. The chassis, drive-train, and motor are usually key parts of the ‘platform’. This platform can be reused to create multiple different models. Each of those models has other components that can assume the shape and functionality of the ‘platform’ components. This means that the platform components have a ‘specified interface’ that can be assumed across the company. For all cars built on this platform, the model-specific components can be built assuming this interface, which means they can be built in parallel, and by another part of the company, or even by another company altogether.

Principle: platform defining characteristic = publicly exposed interface(s)

Corollary: a platform divides component builders into platform-based developers and platform implementers

Platforms in IT

In IT, a platform is the same, but with some nuances. The ‘interfaces’ are software interfaces, often known as Application Programming Interfaces, or APIs. A software interface consists of 3 key things:

- the ‘computational’ view – what functions & procedures can be called in the interface? E.g. create_patient, update_patient

- the ‘informational’ view – functions return data, and both functions and procedures take data as arguments. For the API to be fully specified, these arguments and returns need to be specified too

- a protocol specification – in what order API calls need to be made, how exceptions are reported and so on.

We tend to talk of ‘standard APIs’ and ‘data standards’ to encapsulate the first two. In IT, platform users and implementers are often categorised as ‘front-end’ and ‘back-end’ – i.e. components that use the platform, and components that implement the platform. In fact platform APIs can be defined at many levels, so whether a component counts as back-end or front-end is relative. Nevertheless, in a common situation the back-end is a data repository or information-related service of some kind, and the front-end is a business-oriented application of some kind.
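To make the three views of a software interface concrete, here is a minimal Python sketch of a hypothetical patient service. `create_patient` and `update_patient` are the example operations named above; the class names `Patient`, `PatientService` and `PatientNotFound`, and the rule that a patient must exist before it can be updated, are illustrative assumptions rather than part of any real health API.

```python
from dataclasses import dataclass, replace
from datetime import date
from typing import Dict


# Informational view: the data that operations accept and return.
@dataclass(frozen=True)
class Patient:
    patient_id: str
    family_name: str
    birth_date: date


# Protocol view: how the interface reports a call made out of order.
class PatientNotFound(Exception):
    pass


class PatientService:
    """Computational view: the operations a caller may invoke."""

    def __init__(self) -> None:
        self._patients: Dict[str, Patient] = {}
        self._next_id = 1

    def create_patient(self, family_name: str, birth_date: date) -> Patient:
        patient = Patient(f"p{self._next_id}", family_name, birth_date)
        self._next_id += 1
        self._patients[patient.patient_id] = patient
        return patient

    def update_patient(self, patient_id: str, family_name: str) -> Patient:
        # Protocol rule (assumed): create_patient must precede update_patient.
        if patient_id not in self._patients:
            raise PatientNotFound(patient_id)
        updated = replace(self._patients[patient_id], family_name=family_name)
        self._patients[patient_id] = updated
        return updated
```

Calling `update_patient` with an identifier that was never created raises `PatientNotFound`; pinning down that kind of behaviour is the job of the protocol part of a specification, not just the function list.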

How do platforms work in IT? Consider Amazon, Apple and Google. Apple and Google have developed various technical platforms, based around the mobile operating systems iOS (Apple) and Android (Google). Some parts of these platforms are usable completely separately from mobile devices, e.g. Google Apps, Protocol Buffers etc. These companies use their platforms internally, in the same way as an auto manufacturer, but more importantly they publish them. Via the published platform, 3rd party developers can build applications that use these platform definitions, and assume that those applications will in fact work on the deployed platform. Amazon, Apple and Google implement the platform themselves. What they are doing here is a kind of standards-based procurement, where they control and publish the standard, but leave it to 3rd parties to build the applications.
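The promise that an application "will in fact work on the deployed platform" comes from writing it only against the published interface. A minimal Python sketch, in which everything (`ObservationStore`, the two back-end classes, `mean_value`, the observation code "hr") is a hypothetical illustration rather than any real platform API: one front-end function runs unchanged on two independently written back-ends.

```python
from abc import ABC, abstractmethod
from typing import Dict, List, Optional, Tuple


class ObservationStore(ABC):
    """The platform interface: front-ends are written against this only."""

    @abstractmethod
    def add(self, patient_id: str, code: str, value: float) -> None: ...

    @abstractmethod
    def values(self, patient_id: str, code: str) -> List[float]: ...


class DictStore(ObservationStore):
    """Back-end from one hypothetical vendor: values indexed by key."""

    def __init__(self) -> None:
        self._data: Dict[Tuple[str, str], List[float]] = {}

    def add(self, patient_id: str, code: str, value: float) -> None:
        self._data.setdefault((patient_id, code), []).append(value)

    def values(self, patient_id: str, code: str) -> List[float]:
        return list(self._data.get((patient_id, code), []))


class LogStore(ObservationStore):
    """Back-end from another hypothetical vendor: an append-only log."""

    def __init__(self) -> None:
        self._log: List[Tuple[str, str, float]] = []

    def add(self, patient_id: str, code: str, value: float) -> None:
        self._log.append((patient_id, code, value))

    def values(self, patient_id: str, code: str) -> List[float]:
        return [v for p, c, v in self._log if p == patient_id and c == code]


def mean_value(store: ObservationStore, patient_id: str, code: str) -> Optional[float]:
    """Front-end logic: works unchanged with any conforming back-end."""
    vals = store.values(patient_id, code)
    return sum(vals) / len(vals) if vals else None
```

Neither back-end needs to know about the other or about the front-end; the abstract class is the only shared commitment, which is the point of the interface principle stated earlier.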

Amazon implements the platform because it wants to provide, run and control the production deployment – this is its business – a global online shopping mall. It has added apps to the shop, for which it takes a 30% cut.

The Apple iOS and Google Android platforms are somewhat different – they are produced by their respective companies, but execute not in the cloud but inside mobile devices. Apple’s business model is easy to understand – like Amazon, it takes a sizeable cut of every app bought from the Apple App Store. The more apps, the more it makes. Google’s case is more diffuse – it mostly makes money from people using Google services like search and AdSense on Android. These services exist on iOS and other phone operating systems as well, but Android is slowly becoming more optimised to connect primarily to Google’s main cloud services.

It should be clear by now that I am using the term ‘platform’ to mean ‘services platform’, rather than some other ambiguous concept like ‘product platform’. The latter is almost always a private set of interface specifications relating to a piece of private IP, which may subsequently be published in some way. It may be published as a Trojan horse ‘platform’ with which other companies are pressured to comply, or the product may indeed be best of breed and in fact offer the best de facto starting point for an industry platform.

Platform Ecosystem Roles

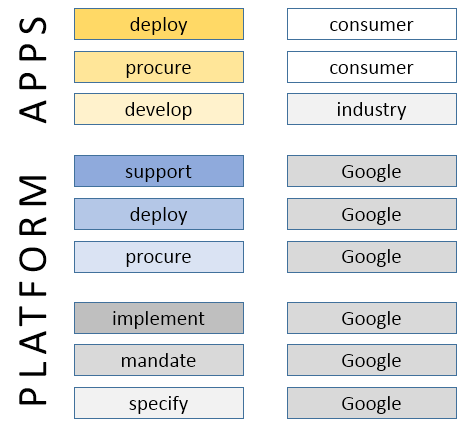

To understand what is going on with the various aspects of a platform, it helps to tease out the various roles being played. Not all of them are obvious with the tech giant examples, because the same big company plays most of them. But it isn’t necessarily that way. Here are the key roles.

- platform specifier – defines and publishes the platform specification

- platform mandater – mandates the use of the platform specification in the target ecosystem

- platform implementer – implements the platform (i.e. back-end(s))

- platform procurer – pays for platform production deployments

- platform deployer – actually deploys the platform implementation

- app developer – develops apps that talk to the platform

- app procurer – buys the apps

- app deployer – runs the apps

We can picture this break-down with respect to Google’s Android platform.

Remember that within this schema, the ‘platform’ may technically consist of many interfaces at many levels, and that what we call an ‘app’ here for convenience’s sake may be someone else’s platform service.

The Platform Concept for Health IT

In the above case, Google performs essentially all the platform-related roles. However, for a public health body such as a MoH, DoH or NHS that wants to create a platform based health IT solution economy, the picture is more diverse.

In this scenario, the jurisdictional body (NHS, MoH, or other) plays only one role, while the provider organisation (e.g. a hospital trust) buys and deploys both back-end and front-end components. Different parts of industry do the investing and implementing. How can this work? The ‘mandater’ role is the key – it is the catalyst that makes the whole reaction work, the keystone in the arch. Why? Because building a platform takes time and money, and requires up-front investment. For industry to have the confidence to make that investment, it needs to be able to rely on what the mandater commits to. The mandater commits industry to building things according to certain specifications, but it also commits buyers – provider organisations in this case – to base procurement on the same specifications.
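The role breakdown lends itself to a simple data structure: a mapping from each role to the party playing it. The Python sketch below contrasts the Android case, where Google plays all the platform-related roles, with the health-service case just described. Two things are assumptions: that the jurisdictional body's single role is mandater (the paragraph names that role as the key one but does not say so outright), and the party labels themselves, which are illustrative.

```python
from enum import Enum
from typing import Dict, Set


class Role(Enum):
    PLATFORM_SPECIFIER = "platform specifier"
    PLATFORM_MANDATER = "platform mandater"
    PLATFORM_IMPLEMENTER = "platform implementer"
    PLATFORM_PROCURER = "platform procurer"
    PLATFORM_DEPLOYER = "platform deployer"
    APP_DEVELOPER = "app developer"
    APP_PROCURER = "app procurer"
    APP_DEPLOYER = "app deployer"


PLATFORM_ROLES = {r for r in Role if r.name.startswith("PLATFORM_")}

# Android: one company plays every platform-related role.
android: Dict[Role, str] = {role: "Google" for role in PLATFORM_ROLES}

# Health service, as described above. Assumption: the jurisdictional body's
# single role is taken to be mandater; the specifier is not named here.
health_service: Dict[Role, str] = {
    Role.PLATFORM_MANDATER: "jurisdictional body",
    Role.PLATFORM_IMPLEMENTER: "industry (back-end vendors)",
    Role.APP_DEVELOPER: "industry (app vendors)",
    Role.PLATFORM_PROCURER: "provider organisation",
    Role.PLATFORM_DEPLOYER: "provider organisation",
    Role.APP_PROCURER: "provider organisation",
    Role.APP_DEPLOYER: "provider organisation",
}


def parties(assignment: Dict[Role, str]) -> Set[str]:
    """Distinct organisations involved in an ecosystem."""
    return set(assignment.values())


def unassigned(assignment: Dict[Role, str]) -> Set[Role]:
    """Roles that nobody has been identified as playing."""
    return set(Role) - set(assignment)
```

Run as written, `parties(android)` is just `{"Google"}`, while the health-service mapping involves four distinct parties, and `unassigned(health_service)` returns only the specifier role, which this paragraph leaves open.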

We can go further and say that the presence of quality specifications and a credible mandater will actually unlock the whole industry and convert it to a new form – a commercially heterogeneous ecosystem of app builders and platform implementers. The platform definition acts as the common point of agreement that enables multiple companies to build technically compatible components, without any specific peer-to-peer agreements. What is required is the following:

- confidence in the platform specifier – this allows implementers to invest in developing apps or back-ends, safe in the knowledge that the specification will be maintained, will evolve in controlled ways, and will be largely stable.

- confidence in the platform mandater – this allows implementers to know that the party that makes the rules will stick to its word, and manage the platform space properly

- confidence in the platform deployer – this allows implementers to invest, knowing that there will be somewhere to actually run their components when they are built

- confidence in the business model – this allows implementers to see how their investment will be recouped over time – by charging for apps, by receiving advertising income, by being paid

Based on the above, I would offer the following definitions for the platform concept:

platform: a set of technical specifications forming a coherent definition of the interface between separately purchasable components

platform ecosystem: a commercial environment in which there is a reliable platform definition and mandater, and a functioning market for platform-based components
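Because a platform is defined by its interface specifications, a buyer can in principle check any vendor's component against them without knowing anything about its internals. A minimal Python sketch, assuming a specification can be reduced to operation names plus parameter names (real specifications also cover data types and protocol, which this ignores); `SPEC`, `conformance_gaps`, `VendorA` and `VendorB` are hypothetical.

```python
import inspect
from typing import Dict, List

# Hypothetical specification: operation name -> required parameter names.
SPEC: Dict[str, List[str]] = {
    "create_patient": ["family_name", "birth_date"],
    "find_patient": ["patient_id"],
}


def conformance_gaps(component: object, spec: Dict[str, List[str]]) -> List[str]:
    """Report every way a component's public operations differ from the spec."""
    gaps = []
    for op, params in spec.items():
        method = getattr(component, op, None)
        if not callable(method):
            gaps.append(f"missing operation: {op}")
            continue
        # On a bound method, inspect.signature omits 'self'.
        actual = list(inspect.signature(method).parameters)
        if actual != params:
            gaps.append(f"{op}: expected {params}, found {actual}")
    return gaps


class VendorA:
    def create_patient(self, family_name, birth_date): ...
    def find_patient(self, patient_id): ...


class VendorB:
    def create_patient(self, name, dob): ...
```

Here `VendorA` conforms and `VendorB` has two gaps (renamed parameters and a missing operation). A real conformance suite would also exercise behaviour, not just signatures.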

A Platform for a Health Service

Let’s consider more closely the platform concept for bodies like a Ministry of Health or the UK NHS. I would argue that the health service’s primary interest in a platform is to give providers a way to avoid procuring massively expensive, inflexible lock-in solutions, and instead procure incrementally, openly and adaptively.

There are good reasons to do this. One reason is that no single company – not even a GE or an IBM – can do everything (although some well-known US vendors may not agree). Not only that, but many of the best innovations routinely come from small companies focussed on a specific problem, e.g. secondary analytics or computerised guidelines. With no reliable platform, those small companies typically implement poor quality data platform components that are not scalable or clinically safe, or else they sell their solution into a private ecosystem, e.g. Microsoft HealthVault, or else are simply bought by a much bigger vendor.

A second reason why even big vendors are now interested in platforms is that in a world where data are now recognised to hold the main business and consumer value, there is no escape from the need to work with data partners and to reduce obstacles to data coming into your computing environment. That’s a complete reversal of priority for some vendors. Two new consortia would seem to bear this out – the Healthcare Services Platform Consortium, set up by Harris, Intermountain and Cerner, and the CommonWell Health Alliance, set up by a group of large EHR vendors – both formed specifically around the platform concept.

The general situation for procuring providers can be greatly improved in a platform environment. Today, they are still mostly obliged to procure monolithic single-vendor solutions, which cost inordinate amounts of money, and deprive them of any influence or control in the long run – they end up as investors in the monolithic solution vendor’s technology, rather than in their own needs. In a platform economy, this is turned on its head – providers have the power to purchase things that will work together, and to do so incrementally.

It should be clear that a non-platform based economy is not in the wider interests of the health service or the citizen in general. It is also economically extremely inefficient. In a recession-riven world, this is not an abstract consideration.

The Importance of a Quality Platform

None of this comes for free. Not only does it rely on a credible platform mandater, but also on one or more technically viable platform definitions. The latter are often thought to be the same as ‘published specifications’ from Standards Development Organisations (SDOs). Nothing could be more mistaken.

Remember that any platform we might want in health has to solve at least key parts of the health information challenges we know so well:

- interoperability – sharing data

- semantic computability – merging and computing (inferencing) with data

- flexibility & adaptability over time – being able to keep systems up to date with requirements

- economic implementability – having affordable and efficient implementation pathways

- clinical relevance – maintaining a strong connection between system semantics and their users’ needs

It’s not my intention to go into the features of a good platform here, but rather to point to two crucial aspects that will make or break the creation or adoption of a platform in any domain space.

We don’t just need ‘standards’, we need a Standards Factory

There is a naive view in e-health that SDO-issued standards can be adopted by a country, perhaps with some ‘profiling’, and then unleashed on industry. Experience in various e-health programmes has shown that this doesn’t work. Instead, what is needed is a ‘standards factory’ mentality, whereby a national programme creates the standards required over time, piece by piece, and issues them in an ongoing programme that includes provider organisations, clinicians, vendors and other stakeholders. Of course some base-level standards are required for things like data types, application interaction and so on. These mostly concern information interoperability and platform mechanics, and below that, languages and ontology. These base standards have to be chosen or developed, and maintained, extremely carefully, and wrong choices here can sink the whole enterprise.

The main aim of a health authority standards factory isn’t just to re-issue these base standards; it is to gradually build up an ongoing set of small specifications that act as standards over time, defining various APIs in the platform, content types, and eventually, active processes, workflows and business collaboration structures. It needs industry’s help in this, as well as that of clinical and other domain experts. And it’s a never-ending enterprise – there will always be new content and workflows to contend with – thus, new APIs, apps and back-end requirements.
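One way to picture the standards factory as a process is a registry to which small specifications are published release by release, with an explicit rule about which releases remain compatible. This is a sketch under stated assumptions: the two-part `major.minor` version scheme, the compatibility rule in `compatible`, and the specification name "medication-list" are illustrative choices, not something any national programme is known to use.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True, order=True)
class Version:
    major: int
    minor: int

    @classmethod
    def parse(cls, text: str) -> "Version":
        major, minor = text.split(".")
        return cls(int(major), int(minor))


class SpecRegistry:
    """Release history for each small specification the factory issues."""

    def __init__(self) -> None:
        self._releases: Dict[str, List[Version]] = {}

    def publish(self, spec: str, version: str) -> Version:
        v = Version.parse(version)
        history = self._releases.setdefault(spec, [])
        # Releases only move forward: no re-issuing or back-dating.
        if history and v <= history[-1]:
            raise ValueError(f"{spec} {version} is not newer than the last release")
        history.append(v)
        return v

    def latest(self, spec: str) -> Version:
        return self._releases[spec][-1]


def compatible(built_against: Version, deployed: Version) -> bool:
    """Assumed rule: same major version, deployed minor at least as new."""
    return built_against.major == deployed.major and deployed.minor >= built_against.minor
```

Under this assumed rule, an app built against 1.0 still runs on a 1.1 deployment, but not on 2.0; that is one concrete reading of specifications that "evolve in controlled ways" while remaining largely stable.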

Principle: a platform is a process, not a product.

We need Ontologies

One component of the base standards referred to above is ontologies. There are two common views about ontologies in the health IT sector: one is a view that ‘ontologies’ are an academic idea that might one day have some relevance in the distant future. The other is that we have already taken care of ontologies with SNOMED CT.

This is another topic that I can’t deal with here in detail, so I’ll just state the essential justification: we need ontologies if we are ever to trust computers to reason with clinical data. What ontologies (can) provide are the definitional underpinnings of health data, so that computers don’t get mixed up between things like:

- an actual blood pressure and a target blood pressure

- an allergic reaction and an allergy

- a prognosis and a recommendation

- two different fractures and the same one

- a foetal heart rate and the mother’s heart rate
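A toy illustration of the first distinction above: if measured and target blood pressures are stored as the same kind of number, an innocent average silently mixes them. The Python sketch below, with hypothetical names `BPKind`, `SystolicBP` and `mean_measured_systolic`, makes the distinction explicit with an enum. A real ontology carries far richer definitions than a single flag, so treat this only as a sketch of why the distinction has to be computable.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable


class BPKind(Enum):
    MEASURED = "measured"  # an actual observation of the patient
    TARGET = "target"      # a goal set by a clinician, not an observation


@dataclass(frozen=True)
class SystolicBP:
    mmhg: int
    kind: BPKind


def mean_measured_systolic(records: Iterable[SystolicBP]) -> float:
    """Average only the values that are real observations."""
    measured = [r.mmhg for r in records if r.kind is BPKind.MEASURED]
    if not measured:
        raise ValueError("no measured blood pressures")
    return sum(measured) / len(measured)


records = [
    SystolicBP(150, BPKind.MEASURED),
    SystolicBP(130, BPKind.TARGET),
    SystolicBP(160, BPKind.MEASURED),
]
naive = sum(r.mmhg for r in records) / len(records)  # wrongly includes the target
correct = mean_measured_systolic(records)
```

With these three records, the naive average over all values is about 146.7 mmHg, while the mean of the measured values alone is 155.0 mmHg.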

Ontologies are needed at various points in the platform infrastructure:

- as the basis for terminologies like SNOMED CT (which is being slowly converted to a proper ontological basis)

- as a basis for core commitments in software (e.g. what an ‘observation’ or an ‘order’ is)

- as a basis for building the main output of the standards factory: content, process, and API definitions

Making this happen doesn’t need many people, but it does need the right people in the right place, and a recognition on the part of the platform mandater of its importance.

Principle: a platform with no ontological underpinning is unlikely to support reliable semantic computing

The role of Open Source

Some readers might wonder why open source is not mentioned above. The main reason is that it is not part of the platform concept per se. Open source is primarily about models of collaboration and IP ownership. An open source community around a platform may increase the credibility of that platform, but it’s not needed for any technical purpose. Neither app developers nor back-end developers need to see each other’s source code for anything to work in a platform ecosystem. Whether, sociologically speaking, more developers might be attracted to a platform if it has some open source components is another question.

If this is true, then why the excitement about open source in places like the UK NHS? Well, one reason might simply be confusion to do with the difference between ‘open source’, ‘open systems’, and ‘open platforms’. However, there are at least two concrete reasons to see open source as useful in the platform economy: cost-sharing and agility. Open source can potentially reduce the amount of gross investment needed in some of the main components in the ecosystem, particularly in the back-end space. Consider the world today with Apache, and an alternative present with no Apache. In the latter case, it is very likely that there would be more commercial web server offerings from numerous companies, but not offering greatly different business value. Open source might make for fewer, better, or cheaper back-ends (it should be noted that big corporations fund most of the work on Apache, not private programmers). However, there is no guarantee of this. In the RDBMS market, the great majority of mission critical deployments are based on commercial closed source offerings. I would argue that credibility of technology and support is ultimately what enterprise customers pay for. (The drivers in academia are of course different, and a whole different argument can be made in that environment).

The second positive factor is agility: open source creates a structure of IP ownership that can cut straight through what would otherwise be complex legal and commercial boundaries, and allows developers to ‘get on with it’ where in a purely commercial ecosystem, this might not happen.

These positive effects of open source tend to be concentrated around the development and support aspect for software components, as shown here.

My view is that open source primarily matters around interoperability components – implementations of specific interface layers, whose sharing automatically predisposes the market to interoperability. However, I also think that whether high-quality, secure back-end components are open source or not is largely irrelevant. What matters is their compliance and performance. Further, we need to remember that large parts of the IT layer in health are no longer software, but ontologies, terminologies, archetypes, computable guidelines and other ‘model artefacts’. These are by and large already open source, or something close to it.

There is another complex angle to do with open source that complicates the argument, and that is to do with original investment. Some open source is created from a very early point on as open source, and with investment on that basis. This kind of open source is uncontroversial. Other things that are open source today – e.g. the Eclipse tooling platform – were originally built as commercial products, and dropped as a ‘code bomb’ on the market. Very large companies such as IBM can afford to do this, as they have a global sales and marketing network that can exploit the new situation. However, small companies with significant IP but a limited sales network cannot do the same, and can in fact be economically damaged by unilaterally open-sourcing their wares. In this case, other bodies – perhaps government, or sponsor corporations – need to recognise the need and help the original innovator recoup some of the costs and have an economic future.

Principle: open source interoperability components are likely to be very beneficial in a platform market

Getting Developers on Board

One of the important sociological angles on the platform concept is getting buy-in. If there are (or appear to be) multiple competing platforms that do more or less the same thing, developers will be the ones who decide what gets taken up. So the question is: what works for developers? The Marc Andreessen article mentioned earlier gives some of the reasons. I would summarise the criteria as follows:

- the platform is easy to understand and well documented

- it is easy to start developing with it, which means available downloads, demonstrator server sites, and SDKs

- it solves more needs rather than fewer, i.e. a platform that does more, and more flexibly, will gain followers.

Some software platforms are easy to use and bring a modicum of power, e.g. Ruby-on-Rails. Others are super-powerful, but can’t be handled by the average programmer, e.g. Eclipse. Nevertheless, for good or for bad, the overriding factor these days (in which, according to some cynics, nobody has an attention span longer than needed to read a tweet) is programmer usability.

Principle: developer usability can make or break a platform’s uptake

Conclusions

My conclusions are as follows:

- the platform concept is both an economic and a technical concept

- it is essential to get the specifications and technical architecture right – poor choices can prevent lift-off

- a platform that is technically good still has to be easy for developers to use, or they won’t adopt it

- open source can help, but isn’t the driver

- de jure standards are not always necessary, and are sometimes downright harmful, due to being unfit, and having glacial maintenance cycles

Ultimately what matters is open specifications that have industry credibility, and it doesn’t matter too much where they come from, but it does matter where they are going.

Mr. Beale, thank you for writing this excellent post. I don’t know any other document that describes the technical requirements of a generic healthcare IT platform so well. It is a pity that it was published in the (kind of) volatile blogpost format. This document should be published in a more permanent format, such as a book chapter or a paper.


What I am missing is a firm statement in the conclusion that answers the question in the title.

Let me give it a try.

An open platform is a platform specification, there for everyone to use without having to deal with patents or other legal obstacles.

Gray areas

—————–

Is the Microsoft Office-platform an open platform?

It is based on ISO standards, there is an API to create additional software, and it is allowed to build document creators/interpreters, like OpenOffice. There are patents, but Microsoft promised during the ISO standardisation process that it would not use them against users of the platform specification.

Is iOS an open platform? Everyone can build software for it. The specifications needed to build software for it are published. But it is not allowed to build an iOS-like operating system. Apple will use their patents, copyrights and trademarks, as well as trade secrets and related rights (also called “intellectual property” or IP), against you.

Is Android an open platform? It offers all the rights that iOS offers, but it is also allowed to write an Android-like OS, and it is even possible to use the original Android source code for that, because it is open source.

————–

In these examples, I think Android is an open platform: it is open source, and there is no IP to hinder you developing/using it in every way you wish.

I think the Microsoft Office-platform is an open platform, although it is not open source, you may use it as you like, and you may use their specifications to build your own software.

Is iOS an open platform? I don’t think so. You are only allowed to work inside the boundaries Apple dictates. Not all vendors in the iOS ecosystem are treated equally. You can buy or exchange rights to do things.

—————

So, concluding.

To determine whether a platform is an open platform: it does not have to be open source, although that helps. But if it is not open source, the creator must promise that no patent issues or other IP issues will be used against users of the platform.

Also, the creator must promise that all users of the platform will have the same rights to use the platform. The creator must make these promises irreversibly.
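The criteria in this comment can be written down as a small checklist. In the Python sketch below, `PlatformTerms` and `is_open_platform` are hypothetical names, and the boolean values for each example encode the commenter's own judgements above, not legal findings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformTerms:
    name: str
    spec_published: bool    # specification available to everyone
    open_source: bool
    ip_non_assertion: bool  # irrevocable promise not to assert patents/IP
    equal_rights: bool      # irrevocable promise of equal rights for all users


def is_open_platform(p: PlatformTerms) -> bool:
    """The commenter's test: open source OR an IP promise, plus equal rights."""
    ip_ok = p.open_source or p.ip_non_assertion
    return p.spec_published and ip_ok and p.equal_rights


# Values encode the judgements made in the comment, not legal findings.
examples = [
    PlatformTerms("Android", spec_published=True, open_source=True,
                  ip_non_assertion=False, equal_rights=True),
    PlatformTerms("MS Office (OpenXML)", spec_published=True, open_source=False,
                  ip_non_assertion=True, equal_rights=True),
    PlatformTerms("iOS", spec_published=True, open_source=False,
                  ip_non_assertion=False, equal_rights=False),
]
```

Evaluated over `examples`, the checklist reproduces the conclusions above: Android and MS Office (OpenXML) pass, iOS does not. Writing the test this way also makes clear that open source is one route to passing, not a requirement.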

The point about patents or other IP encumbrance is a good one. I didn’t think to address it specifically, but it is clearly part of the confidence factor in the platform specifier. A developer community would presumably expect the platform mandater to do the due diligence. If the platform specifier and the mandater are the same organisation, as in the case of iOS, Amazon, Google etc., then I agree there may be an issue.

I think in these cases, there is a not entirely relaxed tango between the developer communities and each big tech org. The big guy can change things, but if it goes too far, it knows the developers will become alienated and eventually go to a competitor. That dynamic alone probably safeguards the app platforms of iOS and Android to a reasonable extent.

I also like the point about equal rights, although I would like to see a more in-depth analysis to understand the problem better.

> The big guy can change things, but if it goes too far, it knows the developers will become alienated and eventually go to a competitor

That is the reason why an ISO/OASIS (or other) standard is a good thing to describe as an essential part of the open platform.

Example: MS OpenXML. The no-sue promise only applies to the ISO standard. It does not extend to any other MS format in the context of this open-platform promise, although MS also made other (older) MS formats publicly available; as far as I know, those carry some legal restrictions which do not apply to OpenXML.

http://www.microsoft.com/openspecifications/en/us/programs/osp/default.aspx

“Microsoft irrevocably promises not to assert any Microsoft Necessary Claims against you for making, using, selling, offering for sale, importing or distributing any implementation to the extent it conforms to a Covered Specification”

OpenXML has become more or less an open standard, and MS Office, when using this document format, is an open platform. (But there are some legal problems when creating software under the GPL.)

Before this, developers needed MS DLLs to create applications which could handle Office documents, and those DLLs were only available by buying MS Office.

Now there are all kinds of OpenXML-handling libraries for all kinds of platforms, which do not use Microsoft libraries.

For example, with the cooperation of Microsoft, Apache has published a Java API for Office documents.

http://blogs.msdn.com/b/interoperability/archive/2009/05/15/open-xml-made-easier-for-java-developers-with-apache-poi.aspx


> I also like the point about equal rights, although I would like to see a more in-depth analysis to understand the problem better.

I am not a lawyer, but I think that a platform is only open when it is open to anyone.

Thanks Tom

Great post indeed.

Indeed, it’s important that this article is out in the open on a blog; no doubt it should/will make it into a book someday.

I am generally in agreement with all of this; let’s say that we both very much agree that a blend of open platform (open specs, e.g. openEHR) and open source is needed in 21st-century healthcare.

Could I extend the point about the advantages of the open source ingredient? I would add to your “two concrete” reasons.

Let me make 3 additional points, from an article I’ll reference from elsewhere. There are of course others…

http://opensource.com/government/12/7/open-source-not-limited-software

#1) Unconstrained innovation – ideas and ambitions can be shared by folk who are oceans apart.

I would contend that the lower the barriers to entry to a platform the lower the constraints for innovation. That brings advantages and disadvantages of course, but for maximum innovation I think one can make a case that open source is helpful.

#2) Transparent credibility – allowing immediate detailed scrutiny immediately boosts credibility.

This point speaks to the very good fit between the medical professional and open source cultures, which I might briefly summarise as “publish or perish”, i.e. doctors take the view that medical advances should be published for review by their peers.

Hence as a doctor I would want to share clinical content (e.g. archetypes) widely with my international colleagues.

It’s also apparent that many software engineers are willing to open source their work, knowing that further related work and opportunity will come.

Famo.us might be this month’s example… one of just thousands of such examples from the software industry.

https://famo.us/

#3) Decentralized control – amendments and improvement can come from the bottom up

This point recognizes the inherent “complex adaptive system” nature of healthcare, where top down control is understood to be the wrong way to do business. Again there are pros/cons to this, but in business terms it is again about enabling innovation by giving up a degree of control. We’ve already acknowledged that no one vendor can handle a market like healthcare, so what better way to move up the value chain than collaborate on an open source platform?

I don’t think any of these 3 points are at odds with your “open platform” thinking; rather, I would suggest they are complementary.

Thanks

Tony

Hi Tony,

Of course I agree with the motivation behind your comments. But the reality is different.

re #1, open source can potentially improve ‘visible’ innovation. But innovation doesn’t happen by accident – it requires a lot of work. That can only occur if architects and developers can be paid to do the work – and work together – as part of their day job. And they need proper requirements prior to that, which don’t appear by magic. None of this can happen on the platform side with people programming in their spare time, and isolated. On the apps side? Maybe a bit.

re #2, I don’t think the evidence supports this idea in general. In specific cases the source code of widely used IP like Apache, ssh, etc. is inspected by people outside the development circle, but outside those cases, I think it’s only developers from within the original group who look at it, for which open source isn’t actually required. For anything specialised (e.g. medical software) that doesn’t have OS-level security or performance implications, I think it’s unlikely that ‘free open source scrutiny’ will occur. There’s too much learning involved, and no payback.

I think a lot of people are prepared to open source work they do in their spare time, for obvious reasons. But statistically very few are in a position to open source the software they work on in their day job.

re #3, no complex software gets built without organised design, and that only happens with organisation, planning and full-time workers. So for each piece of software to have any quality and to connect properly into the platform, you need quality design and development resource. For a small app, this might be one person in their spare time, but for anything else, we’re back to the problem of who pays.

The decentralised control mostly applies between development organisations, not inside them, and I completely agree that that is crucial to the platform ecosystem. But you only need mandated open APIs and data standards to achieve that, not open source.

This review of the Schweik & English book summarises some of the work that is needed to make even a small OSS project succeed. The reality is that most people underestimate this effort, and most OSS projects that are started are abandoned when the effort of sustaining them becomes too great.

There are other reasons open source isn’t more prevalent outside the well known big projects:

1. the successful big projects like Python, Ruby-on-Rails, Linux, Apache all have a specific feature: the user base is more or less the same as the developer base (IT pros). In health software it’s not like this on the app side. On the platform side it could potentially be, but … see reason #2

2. for a large effort like a ‘LAMP for health’ to succeed, significant funding and management are needed. I.e. it’s a commons problem. The market doesn’t deal with commons problems, so they have to be kick-started into place by an external force, either a government funding programme, or a tech giant like an IBM, or a consortium of tech giants.

There are small signs of this happening in a few places, but nothing like what is needed to make open source mainstream in a domain vertical like health. So far, huge amounts of central funding have been wasted in most countries (the UK providing the best example) on the complete opposite of the kind of effort that would make things happen; in fact, the spending follows almost exactly the ‘procurement failure’ pattern documented in my next post.

The ‘complex systems’ analogy is an apt one, but what it tells us is that not much will happen until the overall IT economy is at a completely new operating point, where the conditions for doing high-quality, joined-up software development across and between companies exist. To get there requires a system shock. I think we are a long way from that, because a new market operating point matching that description is actually a challenge to the fundamentals of capitalism itself, particularly w.r.t. the current approach of basing company value on private IP value.

As desirable as it might be, I don’t think this is realistic as a first step; I think instead harnessing the power of existing development organisations (companies, trust IT depts, some academia, etc) in an integrated ecosystem is the way to go, where integration is achieved with open / common interfaces, data and knowledge standards.

In today’s economy, open source for major projects and tools will need ‘commons’ funding – either from central grants or from tech giants. If the domain really wants that to happen, its users need to do something about setting up the conditions for it.

I say all of this as someone whose most complex product has been open source from requirements to implementation (12 years now); the product is widely desired in industry (and its specification became an ISO standard in 2009); and yet most of the work has been done in my spare time. The market mechanisms to fund this kind of thing just don’t exist yet.


Could you expand on this? What is being changed about SNOMED CT and why is it necessary?

“as the basis for terminologies like SNOMED CT (which is being slowly converted to a proper ontological basis)”

This is not new; over the last 10 years, experienced ontologists have examined SNOMED CT and proposed solutions to improve it, based on work in BFO2, BioTopLite and other such ontologies.