[Image: from CatSalut website]

A slide on the future of HIT, from the openEHR conference hosted by the Catalan Health System (CatSalut), 06 June 2023, Barcelona.

WHAT

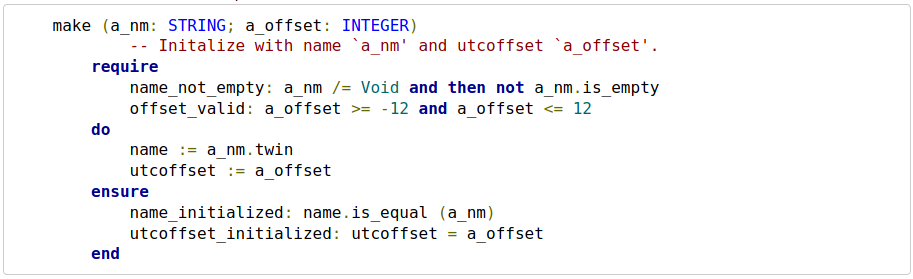

- knowledge-based – computational representation of foundational knowledge: ontologies, terminology

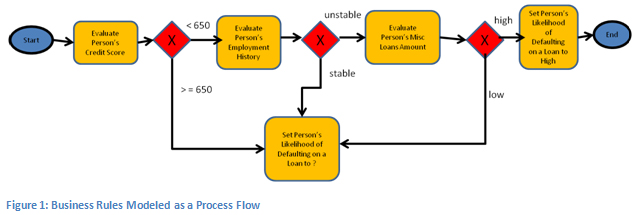

- model-based – computational representation of operational knowledge: information and process definitions

- process-based – patient care pathway as first-order computational entity: derived from local or published computable guidelines
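As a rough illustration of the knowledge-based and model-based layers, the sketch below keeps terminology and an information model as plain data that a domain expert could author, with one generic function that validates records against them. All names (`TERMINOLOGY`, `ElementConstraint`, `BLOOD_PRESSURE_MODEL`, `validate`), the codes, and the numeric ranges are hypothetical, chosen for the example only; this is not the openEHR API or a real code system.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Knowledge layer (hypothetical): codes mapped to human-readable terms.
TERMINOLOGY: Dict[str, str] = {
    "bp.systolic": "Systolic blood pressure",
    "bp.diastolic": "Diastolic blood pressure",
}


@dataclass(frozen=True)
class ElementConstraint:
    """One data point in a model: which code, which unit, which value range."""
    code: str
    unit: str
    low: float
    high: float


# Model layer (hypothetical): authored as data, not buried in software or a DB schema.
# The ranges are illustrative only.
BLOOD_PRESSURE_MODEL: List[ElementConstraint] = [
    ElementConstraint("bp.systolic", "mmHg", 0, 1000),
    ElementConstraint("bp.diastolic", "mmHg", 0, 1000),
]


def validate(model: List[ElementConstraint],
             record: Dict[str, Tuple[float, str]]) -> List[str]:
    """Return readable problems; an empty list means the record conforms."""
    problems = []
    for c in model:
        label = TERMINOLOGY[c.code]
        if c.code not in record:
            problems.append(f"{label}: missing")
            continue
        value, unit = record[c.code]
        if unit != c.unit:
            problems.append(f"{label}: expected unit {c.unit}, got {unit}")
        elif not (c.low <= value <= c.high):
            problems.append(f"{label}: {value} outside {c.low}-{c.high} {c.unit}")
    return problems
```

The point of the design is that `validate` knows nothing about blood pressure: changing the model means editing data, not code.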

HOW

- Take all hidden semantics out of the software and DB schemas and represent them as first-order entities, created by domain experts, not IT people

- Realised in a services-based open platform, based on terminology, models, model-driven software, and care pathway execution

- Used to create a system for representing and tracking care pathways, in which each task and decision point is a transparent user/computer interaction – not a pile of hidden ‘business logic’

- Voice interaction – voice + models allow for constrained vocabularies (efficient for voice) and goal-oriented navigation (user-driven) rather than form-based navigation (developer-driven); documentation is created ‘on the way’

- Machine learning – use of AI created via supervised training of blank LLMs to perform patient-specific reasoning on the data
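One way to picture a care pathway as an explicit entity with transparent task and decision points is the minimal sketch below. The pathway is plain data, and a small generic executor records every task and decision it passes through. The names (`Task`, `Decision`, `PATHWAY`, `run`) and the 140 mmHg threshold are assumptions made for the example; they are not taken from the talk, from any guideline, or from any openEHR specification.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Union


@dataclass(frozen=True)
class Task:
    """A unit of work; `next` names the following step, or None to finish."""
    name: str
    next: Optional[str] = None


@dataclass(frozen=True)
class Decision:
    """A visible decision point: the question is stated, not hidden in code."""
    name: str
    question: str
    test: Callable[[dict], bool]
    if_true: str
    if_false: str


Step = Union[Task, Decision]

# Hypothetical pathway; the threshold is illustrative, not clinical guidance.
PATHWAY: Dict[str, Step] = {
    "measure_bp": Task("measure_bp", next="bp_high"),
    "bp_high": Decision(
        "bp_high",
        "Systolic pressure at or above 140 mmHg?",
        lambda patient: patient["systolic"] >= 140,
        if_true="review",
        if_false="routine",
    ),
    "review": Task("review"),
    "routine": Task("routine"),
}


def run(pathway: Dict[str, Step], start: str, patient: dict) -> List[str]:
    """Walk the pathway for one patient and return a readable trace."""
    trace: List[str] = []
    current: Optional[str] = start
    while current is not None:
        step = pathway[current]
        if isinstance(step, Decision):
            answer = step.test(patient)
            outcome = "yes" if answer else "no"
            trace.append(f"decision {step.name}: {step.question} -> {outcome}")
            current = step.if_true if answer else step.if_false
        else:
            trace.append(f"task {step.name}")
            current = step.next
    return trace
```

Calling `run(PATHWAY, "measure_bp", {"systolic": 150})` yields a trace listing the measurement task, the decision with its question and answer, and the review task, which doubles as documentation created along the way.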

RESULT

Signs of success:

- Engineering: we get rid of applications – evolve to ‘task-oriented IT’

- Administrative: we get rid of ‘referrals’ – evolve to ‘straight-through care’

- Clinical: single-source-of-truth medications list, no more ‘med rec’ – evolve from institutional copies to a true ‘digital twin’

- Patient records: we get rid of separate clinical documenting – ‘documenting while doing’

- => a patient-centric care experience.